What is this survey about?

This survey positions confidence not just as an estimation target, but as a control primitive for building reliable LLM systems.

Most work on confidence in large language models has focused on estimation, uncertainty quantification, and calibration. In deployed systems, however, the key question is how confidence should be used to govern behavior. This survey studies confidence utilization: the use of confidence-related signals to control system decisions. We formalize this through a unified framework in which confidence is defined over decision units under a local state and then consumed by a policy to determine actions.
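To make the framework concrete, here is a minimal sketch of confidence being consumed by a policy. It is an illustration under stated assumptions, not the survey's formalization: the names `DecisionUnit`, `LocalState`, `Action`, and `threshold_policy`, the specific action set, and the threshold values are all hypothetical, and the confidence score is assumed to arrive already estimated as a number in [0, 1].

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    # Hypothetical action set; a real system may expose different actions.
    ACCEPT = "accept"
    RETRIEVE = "retrieve"
    ESCALATE = "escalate"
    ABSTAIN = "abstain"


@dataclass(frozen=True)
class DecisionUnit:
    # The object that confidence is attached to, e.g. a token, claim, or answer.
    kind: str
    content: str


@dataclass(frozen=True)
class LocalState:
    # Hypothetical local context available when the decision is made.
    retrieval_available: bool
    stronger_model_available: bool


def threshold_policy(
    confidence: float,
    state: LocalState,
    accept_at: float = 0.8,      # assumed threshold, for illustration only
    abstain_below: float = 0.3,  # assumed threshold, for illustration only
) -> Action:
    """Map a confidence score and the local state to a system action."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must lie in [0, 1]")
    if confidence >= accept_at:
        return Action.ACCEPT
    if confidence < abstain_below:
        return Action.ABSTAIN
    # Mid-range confidence: prefer gathering evidence, then a stronger model.
    if state.retrieval_available:
        return Action.RETRIEVE
    if state.stronger_model_available:
        return Action.ESCALATE
    return Action.ABSTAIN
```

In this sketch, a score of 0.5 for a `DecisionUnit(kind="answer", ...)` would trigger retrieval when the local state allows it, and abstention when neither retrieval nor a stronger model is available. The point is the separation of concerns: how the score is estimated is independent of how the policy uses it.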

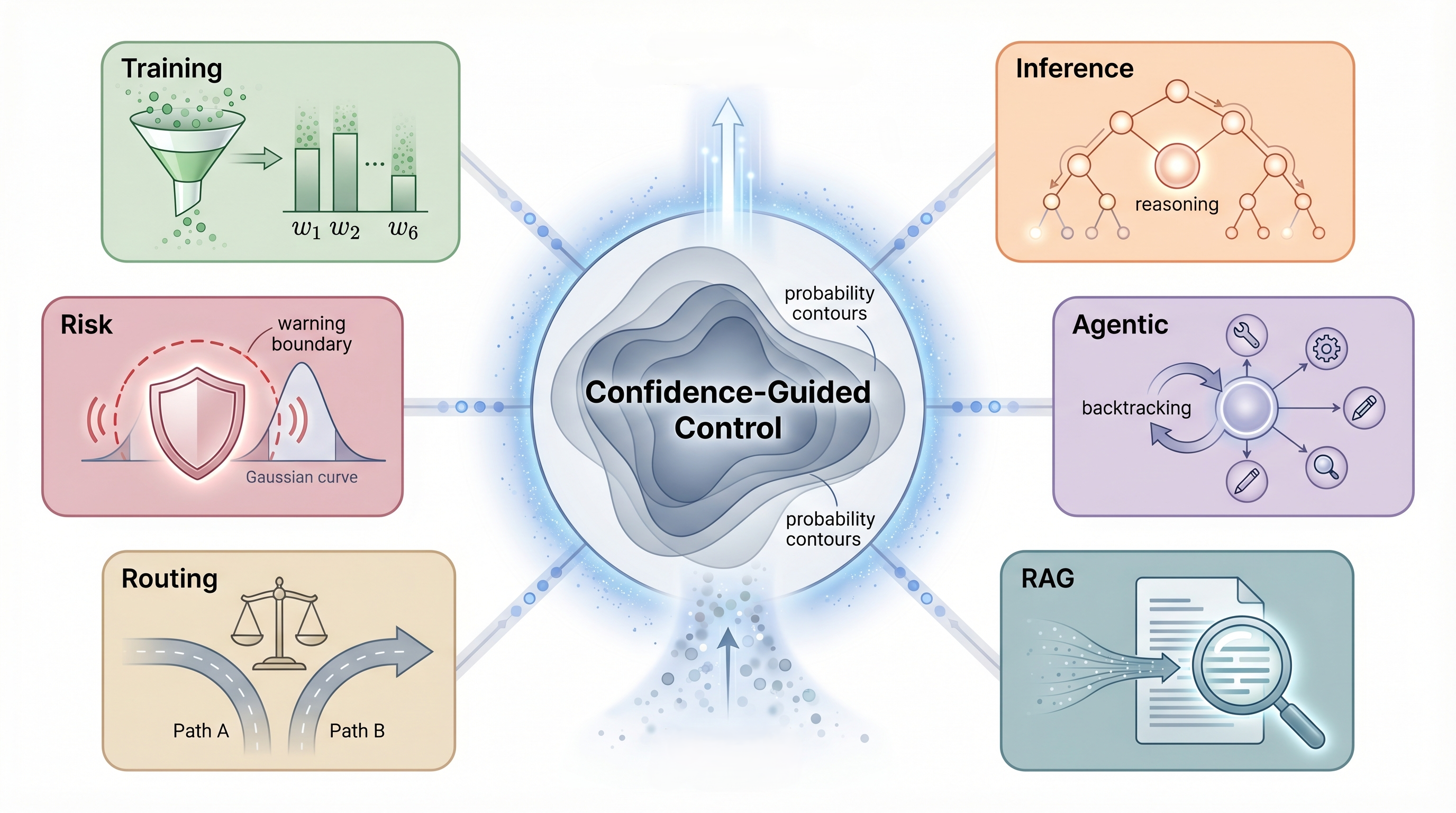

Using this lens, we organize the literature across the full LLM lifecycle: training, inference, model selection and cascading, retrieval-augmented generation, risk management, and agentic control. We compare methods by signal source, decision unit, and functional role, and highlight open challenges in confidence semantics, composition, source attribution, decision-aware evaluation, and robustness.