Overview

Confidence appears across LLM systems in many forms: probability-like estimates, uncertainty scores, log-probability quantities, verbalized confidence, sample-agreement metrics, semantic uncertainty, verifier, judge, or reward-model scores, and hybrids that combine multiple sources. Despite this diversity, these signals share a common operational feature: to be useful for control, each must affect a downstream decision.

This framework abstracts away the surface-level differences and focuses on the functional role of confidence as a control signal that transforms decision states and shapes which actions are taken.

Decision State & Units

At any step $t$ in a generative process, we have a decision state $\xi_t$ that captures the context.

Given this state, we must choose among discrete decision units $U_t = \{u_1, u_2, \ldots, u_n\}$. The unit granularity varies by application:

- token — next token (a word or subword piece) in decoding

- span — consecutive tokens or phrases

- claim — atomic fact or statement

- chunk — passage or document segment

- example — training or demonstration instance

- response — complete answer or turn

- model/tool — expert or module to invoke

- step — action in a trajectory or plan

- trajectory — complete sequence of actions

- vote — aggregate choice in multi-sample voting

Confidence Signal

A confidence signal is any reliability-relevant score or distribution produced by the model or an auxiliary system. In its most general form, the signal is an $m$-dimensional vector (possibly $m = 1$) that quantifies how much to trust, weight, or pursue each decision unit $u$ given the current state $\xi_t$. Sources include:

- Self — token log-probabilities, hidden states, verbalized uncertainty

- Sample-based — agreement across multiple samples, semantic entropy, consistency scores

- Auxiliary — verifier scores, reward-model outputs, router predictions, judge decisions

- External — retrieval relevance, tool execution success, environment feedback

- Hybrid — combinations of the above
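As a rough illustration of how signals from different sources can be stacked into one confidence vector, the sketch below computes one self signal and two sample-based signals for a single candidate answer. The function names and the input values are hypothetical, and the entropy function groups answers by exact string match, which is only a crude stand-in for semantic entropy (which clusters answers by meaning).

```python
import math
from collections import Counter


def mean_token_logprob(token_logprobs):
    """Self source: length-normalized log-probability of a generated sequence."""
    return sum(token_logprobs) / len(token_logprobs)


def sample_agreement(answers):
    """Sample-based source: majority answer and the fraction of samples that match it."""
    majority, votes = Counter(answers).most_common(1)[0]
    return majority, votes / len(answers)


def answer_entropy(answers):
    """Sample-based source: Shannon entropy (in nats) over distinct answers.

    Assumption: answers are grouped by exact string match, not by meaning.
    """
    n = len(answers)
    return -sum((c / n) * math.log(c / n) for c in Counter(answers).values())


# Hypothetical inputs, made up for illustration.
logprobs = [-0.1, -0.3, -0.2]
samples = ["Paris", "Paris", "Lyon", "Paris"]

majority, agreement = sample_agreement(samples)

# A confidence vector with m = 3 for the candidate answer `majority`.
signal = (
    math.exp(mean_token_logprob(logprobs)),  # geometric-mean token probability
    agreement,                               # share of samples that agree
    answer_entropy(samples),                 # lower means more consistent samples
)
```

The three entries live on different scales, so in practice they would usually pass through a calibration or normalization step before being compared or combined.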

Decision Process

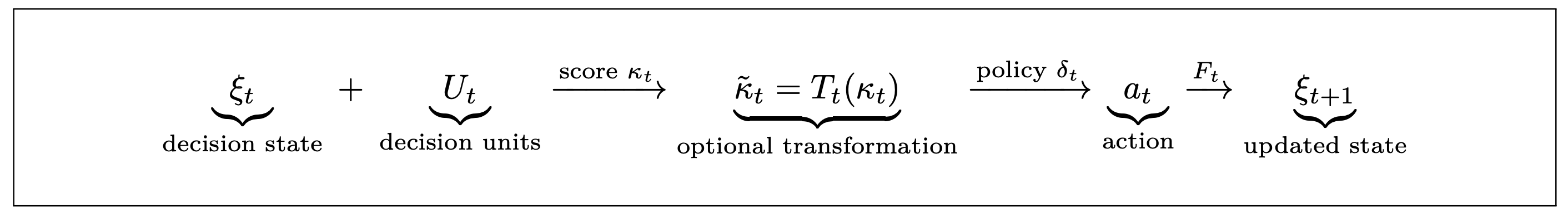

The pipeline from state to action flows through four stages:

- Transform $\tilde{T}_t$: Normalize, calibrate, or combine confidence signals. Examples: softmax (for selection), clipping (for filtering), weighted averaging (for fusion).

- Policy $\delta_t$: Map transformed scores to a decision rule. Examples: argmax (select highest), threshold (filter below cutoff), softmax-sample (probabilistic), or defer (abstain).

- Action $a_t$: Execute the policy to commit to one unit or a set of units.

- Updated state $\xi_{t+1}$: Incorporates the outcome of the action and feeds into the next step.
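The four stages can be sketched as one pass of a small loop. This is a minimal illustration under stated assumptions, not the survey's formal definition: the state is modeled as a plain list of past actions, the transform is a softmax, the policy is argmax with a deferral threshold, and all function names and the `"DEFER"` marker are hypothetical.

```python
import math


def softmax(scores, temperature=1.0):
    """Transform: turn raw confidence scores into a normalized distribution."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp((s - peak) / temperature) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def argmax_or_defer(probs, threshold):
    """Policy: index of the top-scoring unit, or None (defer) if it is below threshold."""
    best = max(range(len(probs)), key=probs.__getitem__)
    return best if probs[best] >= threshold else None


def step(state, units, raw_scores, threshold=0.5):
    """One pass: confidence -> transform -> policy -> action -> updated state."""
    probs = softmax(raw_scores)                 # transform
    choice = argmax_or_defer(probs, threshold)  # policy
    action = units[choice] if choice is not None else "DEFER"  # action
    return action, state + [action]             # updated state (new list)


# Hypothetical units and raw confidence scores.
action, next_state = step([], ["A", "B", "C"], [2.0, 0.5, 0.1])
deferred, _ = step([], ["A", "B", "C"], [1.0, 1.0, 1.0])
```

With the scores above, the first call commits to the top-scoring unit, while the second call defers because no unit reaches the threshold after normalization. Swapping `argmax_or_defer` for a sampling or top-k rule changes the policy without touching the transform.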

Three Axes of Variation

Confidence systems vary along three independent dimensions. Understanding these axes helps map papers and design new methods systematically.

Axis 1: Source

- Self: Token probabilities, hidden states, verbalized estimates

- Sample-based: Disagreement, consistency, semantic entropy across samples

- Auxiliary: Verifier, reward model, router, judge, ensemble

- External: Retrieval, tools, environment, human labels

- Hybrid: Multiple sources combined or competing

Axis 2: Unit / Granularity

- Token: Single token (a word or subword piece) in decoding

- Local: Phrase, claim, chunk, sentence

- Item/Candidate: Complete response or solution

- Model/Tool/Agent: Which expert to invoke

- Step: Individual action in a trajectory

- Trajectory/Episode: Full sequence of decisions

Axis 3: Functional Role

- Selection: Choose best among candidates

- Weighting: Probabilistic or aggregate combination

- Allocation: Distribute budget (compute, samples, context)

- Control-flow: Decide whether to continue, stop, revise, or escalate

- Aggregation: Combine outputs from multiple sources or agents

- Learning signal: Supervise or weight training data
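One way to use the three axes for mapping methods is to encode each axis as an enumeration and tag every method with one value per axis. The sketch below does this; the class names and the two example entries are illustrative assumptions rather than classifications taken from the survey.

```python
from dataclasses import dataclass
from enum import Enum


class Source(Enum):
    SELF = "self"
    SAMPLE_BASED = "sample-based"
    AUXILIARY = "auxiliary"
    EXTERNAL = "external"
    HYBRID = "hybrid"


class Unit(Enum):
    TOKEN = "token"
    LOCAL = "local"
    ITEM = "item/candidate"
    MODEL_TOOL_AGENT = "model/tool/agent"
    STEP = "step"
    TRAJECTORY = "trajectory/episode"


class Role(Enum):
    SELECTION = "selection"
    WEIGHTING = "weighting"
    ALLOCATION = "allocation"
    CONTROL_FLOW = "control-flow"
    AGGREGATION = "aggregation"
    LEARNING_SIGNAL = "learning signal"


@dataclass(frozen=True)
class MethodProfile:
    """Position of one method along the three axes."""
    name: str
    source: Source
    unit: Unit
    role: Role


# Hypothetical entries, tagged by hand for illustration only.
methods = [
    MethodProfile("majority-vote answer selection", Source.SAMPLE_BASED, Unit.ITEM, Role.SELECTION),
    MethodProfile("verifier-guided step search", Source.AUXILIARY, Unit.STEP, Role.CONTROL_FLOW),
]


def filter_by(profiles, **axes):
    """Return the profiles whose axis values match every keyword given."""
    return [p for p in profiles if all(getattr(p, k) == v for k, v in axes.items())]


selection_methods = filter_by(methods, role=Role.SELECTION)
```

Treating the axes as independent gives a grid of 5 × 6 × 6 cells, and filtering along one axis at a time is a quick way to see which cells are crowded and which are empty.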

Lifecycle: From Training to Deployment

This framework specializes across the six domains covered in the survey:

- Training (§3): Confidence selects training examples and weights gradients. Sources: self-confidence (answer likelihood), auxiliary (judge/verifier), hybrid (uncertainty + influence). Units: examples, tokens, preferences. Role: learning signal allocation and supervision quality control.

- Inference (§4): Confidence shapes the next-token distribution, decides when to stop generation, and determines which candidate to select. Sources: self (logprobs, verbalized, semantic entropy), auxiliary (process reward model, PRM). Units: tokens, answers, steps. Role: decoding control, stopping, output selection.

- Routing (§5): Confidence predicts query suitability (pre-call) or answer quality (post-hoc) for cascading or model selection. Sources: auxiliary (routers), self (calibrated), hybrid (score gap). Units: queries, answers. Role: deferral, escalation, portfolio orchestration.

- RAG (§6): Confidence determines whether to retrieve, what context to keep, and whether to abstain. Sources: self (low token-level confidence triggers retrieval), auxiliary (retrieval quality), hybrid (consistency + relevance). Units: tokens/sentences (trigger), documents/passages (filter), queries (routing). Role: retrieval triggering, context filtering, groundedness checking.

- Risk (§7): Confidence detects hallucinations, calibrates predictions, and enables conformal guarantees and abstention. Sources: self (semantic entropy, self-check), auxiliary (probes, verifiers), hybrid (calibrated predictor). Units: claims, answers. Role: hallucination detection, coverage guarantees, reliability certification.

- Agentic (§8): Confidence guides search (Monte Carlo tree search, MCTS, with PRMs), triggers escalation and backtracking, and aggregates multi-agent votes. Sources: auxiliary (verifiers, rewards), peer (voting), self (intrinsic verification). Units: steps, trajectories, responses. Role: search guidance, control flow, consensus and escalation.
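Two of the roles listed above, post-hoc escalation in routing and retrieval triggering in RAG, reduce to simple threshold rules. The sketch below shows both under stated assumptions: each model is a hypothetical callable returning an answer and a confidence in [0, 1], the model list is non-empty and ordered from cheapest to most expensive, and the thresholds are arbitrary placeholder values rather than recommended settings.

```python
import math


def cascade(query, models, threshold):
    """Routing (post-hoc): try models in order and escalate while confidence is low.

    Returns (answer, confidence, index of the model used). If no model reaches
    the threshold, the last model's answer is returned as a fallback.
    """
    for i, model in enumerate(models):
        answer, confidence = model(query)
        if confidence >= threshold:
            break
    return answer, confidence, i


def should_retrieve(token_logprobs, min_prob=0.3):
    """RAG trigger: retrieve if any generated token has probability below min_prob."""
    return any(math.exp(lp) < min_prob for lp in token_logprobs)


# Hypothetical stand-ins for a cheap and an expensive model.
def small_model(query):
    return "draft answer", 0.42


def large_model(query):
    return "careful answer", 0.91


answer, confidence, used = cascade(
    "example query", [small_model, large_model], threshold=0.8
)
needs_context = should_retrieve([-0.1, -2.0])
```

In this run the small model falls below the threshold, so the query escalates to the large model. The second log-probability in the last call corresponds to a token probability of about 0.14, which is enough to trigger retrieval.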